OCR Evaluation


24 Sep 2021

10:51 AM

As part of a recent hackathon I teamed up with Angel Borroy to connect Alfresco Community Edition with a couple of open source OCR applications. The project is available on GitHub. The two OCR applications chosen are OCRmyPDF (version 9.6.0+dfsg) and Apache Tika (version 1.24-full) (both based on Tesseract). OCRmyPDF also contains a number of other tools for cleaning up the PDF (in particular unpaper) and producing a searchable PDF that looks like the original document. Both tools allow for configuring Tesseract itself, but for these tests I've pretty much stuck to their out-of-the-box settings.

I chose the start of Alice's Adventures in Wonderland as the test text and used ImageMagick to convert it into an image embedded in PDF files. ImageMagick has a large number of image manipulation options, which I used to degrade the image in various ways and test how well the OCR tools coped.

To measure the accuracy of the OCR, I calculated the Levenshtein distance between the input and output text. In theory the distance would be zero if the conversion was 100% accurate, but it can be particularly hard to accurately identify certain characters (e.g. whitespace). To get the text output from OCRmyPDF I used the --sidecar option.
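As an illustration of the metric, here is a minimal sketch of the Levenshtein distance in Python. It is not the code used for these tests (the post does not say which implementation was used), and the function name is my own.

```python
def levenshtein(source: str, target: str) -> int:
    """Return the minimum number of single-character insertions,
    deletions and substitutions needed to turn source into target."""
    # previous[j] holds the distance between the source prefix processed
    # so far and the first j characters of target.
    previous = list(range(len(target) + 1))
    for i, s_char in enumerate(source, start=1):
        current = [i]  # turning i source characters into an empty target
        for j, t_char in enumerate(target, start=1):
            cost = 0 if s_char == t_char else 1
            current.append(min(
                previous[j] + 1,         # drop s_char from source
                current[j - 1] + 1,      # add t_char to source
                previous[j - 1] + cost,  # keep or substitute the character
            ))
        previous = current
    return previous[-1]


if __name__ == "__main__":
    # One misread character ("l" read as "1") gives a distance of one.
    print(levenshtein("Alice was beginning", "A1ice was beginning"))
```

A perfect conversion gives a distance of zero, and an empty OCR output gives a distance equal to the length of the source text.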

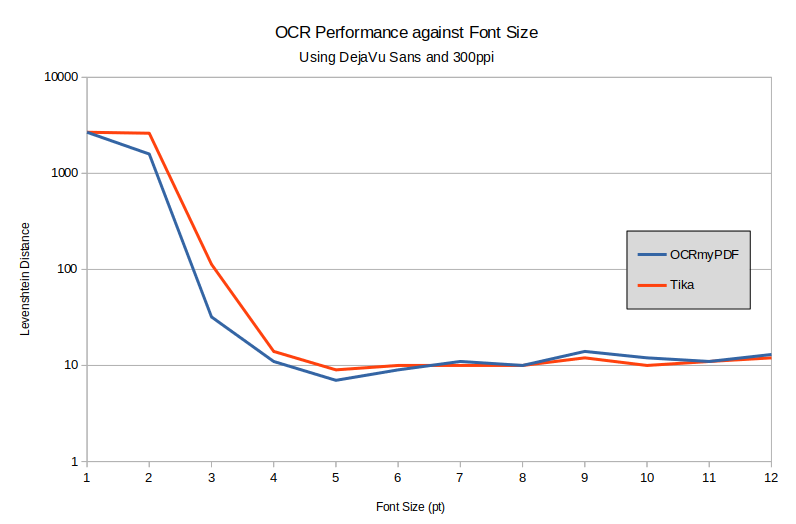

Font Size

I standardised on an A4 PDF at 300ppi with DejaVu Sans. This produced text that was unreadable at 1pt, but could easily be seen at 2pt. The two OCR applications did not manage as well as a human, and only reached their peak performance at about 4pt text. OCRmyPDF performed slightly better than Tika.
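The post does not give the exact ImageMagick command, so the following is only a sketch of how such a test page might be rendered. It builds an argument list for the legacy `convert` program using the `-density`, `-page`, `-font` and `-pointsize` options and the `text:` input format. The file names are hypothetical, and the font name `DejaVu-Sans` is an assumption: the names ImageMagick recognises vary between systems.

```python
def render_command(text_file: str, pdf_file: str, point_size: int,
                   font: str = "DejaVu-Sans") -> list[str]:
    """Build an ImageMagick command that renders a text file to an A4 PDF.

    Assumption: the font name matches one known to the local ImageMagick
    installation; adjust it if the font is registered under another name.
    """
    return [
        "convert",
        "-density", "300",              # render at 300 pixels per inch
        "-page", "A4",                  # A4 page geometry
        "-font", font,
        "-pointsize", str(point_size),
        f"text:{text_file}",            # read the input as plain text
        pdf_file,
    ]


if __name__ == "__main__":
    # Hypothetical file names; print the commands rather than running them.
    for size in (1, 2, 4, 8):
        print(" ".join(render_command("alice.txt", f"alice-{size}pt.pdf", size)))
```

The resulting list can be passed to `subprocess.run`. On ImageMagick 7 the equivalent entry point is `magick` rather than `convert`.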

Graph showing OCR accuracy against font size

The Levenshtein distance for a 1pt font was the total number of characters in the source text, which effectively acts as an upper limit in all the tests.

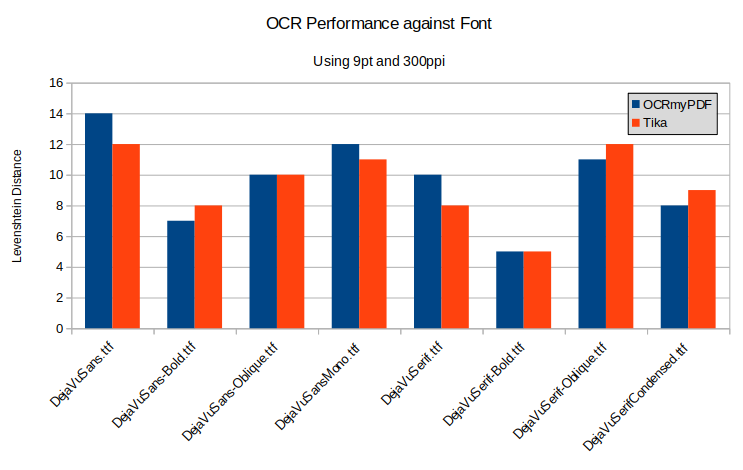

Font

There is an almost unlimited number of fonts which we could test, but I decided to just compare different faces from the DejaVu family. There was very little difference in performance between the two applications in this test, and a difference of only a few characters between the various fonts.

Graph showing OCR accuracy against font face

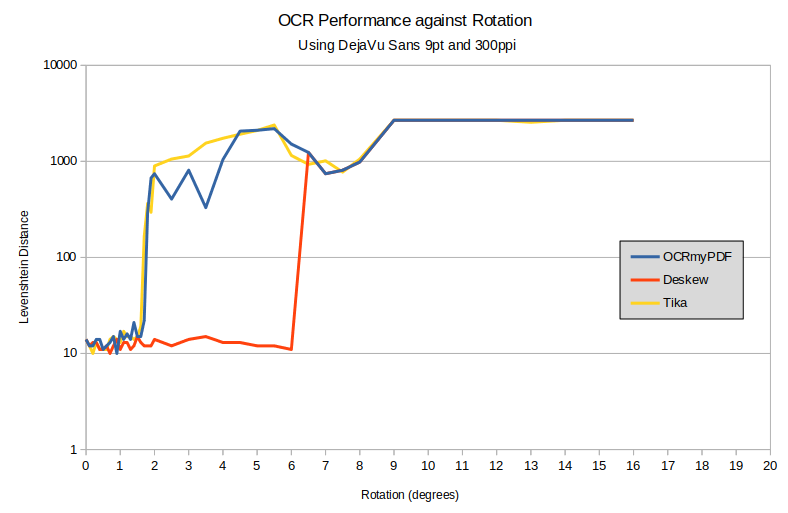

Rotation

It's fairly common for scanned documents or text in photos to be slightly skewed. Here I tested three configurations - the default OCRmyPDF settings, OCRmyPDF with --deskew and Apache Tika. OCRmyPDF also has a --rotate-pages option for when some pages in a PDF are landscape or upside down, but this only works for multiples of ninety degrees. We can see that the deskew option made a huge difference and allowed reading text up to six degrees from the vertical.

Graph showing OCR accuracy against page rotation

One nice feature of OCRmyPDF was that it produced an output PDF with the page rotated so that the text was in the correct orientation.

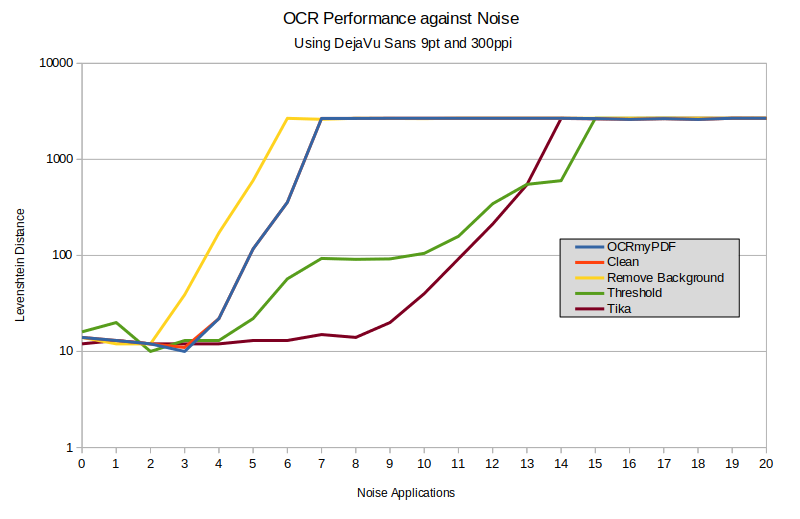

Noise

I tested this by adding noise using the +noise Gaussian option from ImageMagick. I found that a single application of noise made little difference to the results, so I tested against repeated applications. Although the noise itself is random, I used the same set of documents for each test. The document with ten applications of noise looked like this:

Example text after ten applications of noise
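One way to apply the noise repeatedly is to repeat the operator on a single command line, since ImageMagick applies operators in the order given. The sketch below builds such a command; whether the original tests chained the operator like this or re-ran the command on its own output is not stated, and the function and file names are hypothetical.

```python
def repeated_noise_command(src: str, dst: str, applications: int) -> list[str]:
    """Build an ImageMagick command that applies Gaussian noise several times."""
    if applications < 0:
        raise ValueError("applications must not be negative")
    command = ["convert", src]
    for _ in range(applications):
        # Each "+noise Gaussian" pair adds one more layer of noise.
        command += ["+noise", "Gaussian"]
    command.append(dst)
    return command


if __name__ == "__main__":
    # Hypothetical file names; print the command rather than running it.
    print(" ".join(repeated_noise_command("page.png", "page-noise-10.png", 10)))
```

Generating each noisy document once and reusing it for every tool keeps the comparison fair, because the noise differs on every run.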

OCRmyPDF provides a few useful options to improve the extraction of text from an image. The first, --clean, calls through to a tool called "unpaper" which tries to remove artifacts from scanning. The second, --remove-background, is intended for removing a background image from a document. The third option that I tested was --threshold, which is an experimental option to convert every pixel to black or white before sending the image to Tesseract.
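The four OCRmyPDF configurations compared here can be expressed as a small table of extra flags. The sketch below builds one command per variant, each writing its recognised text to a --sidecar file for comparison with the source. The variant labels, function name and file names are my own; only the flags come from OCRmyPDF.

```python
# Extra flags for each configuration; the labels are just names for this sketch.
VARIANTS = {
    "default": [],
    "clean": ["--clean"],
    "remove-background": ["--remove-background"],
    "threshold": ["--threshold"],
}


def ocrmypdf_command(input_pdf: str, output_pdf: str, sidecar_txt: str,
                     extra_flags: list[str]) -> list[str]:
    """Build an OCRmyPDF command that also writes the text to a sidecar file."""
    return ["ocrmypdf", "--sidecar", sidecar_txt, *extra_flags,
            input_pdf, output_pdf]


if __name__ == "__main__":
    # Hypothetical file names; print the commands rather than running them.
    for name, flags in VARIANTS.items():
        command = ocrmypdf_command("noisy.pdf", f"out-{name}.pdf",
                                   f"out-{name}.txt", flags)
        print(" ".join(command))
```

Option availability depends on the OCRmyPDF version installed, so it is worth checking `ocrmypdf --help` before relying on any of these flags.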

Although Gaussian noise is easy to generate, it's not the same noise that is typically seen on scanned documents, so these results should be taken with a grain of salt. Overall Tika came out with better results than any of the OCRmyPDF options I tested. The clean option didn't seem to make any difference at all - the results were almost exactly the same as the default settings for OCRmyPDF. The remove background option actually made things worse - presumably because the noise was applied to both the foreground (text) and the background. The threshold option improved the results significantly and even provided better accuracy than Tika for certain noise levels.

Graph showing OCR accuracy against noise level

ImageMagick provides various methods of generating noise, but all of them seemed to give similar results.

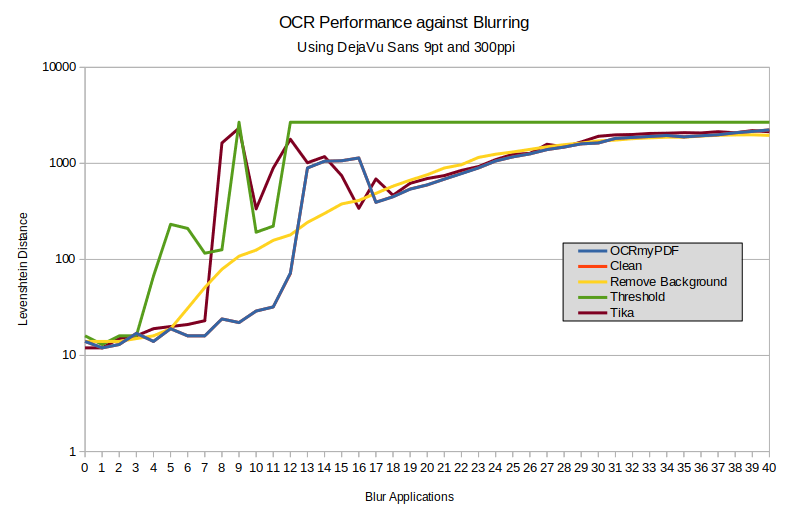

Blur

Using repeated applications of the -blur 100 option from ImageMagick I produced documents with varying amounts of blur. With ten applications of blur the text looked like this:

Example text after ten blur applications

I tested the OCR fidelity using the same set of options as for the noise test. Overall OCRmyPDF performed better than Tika, and generally the default options gave a more accurate scan than anything else. At certain blur levels the remove background option gave the best results. The clean option again gave the same results as the default settings.

Graph showing OCR accuracy against blurring

Conclusions

OCRmyPDF and Tika are both based on Tesseract, but their default settings performed best under different circumstances. While both tools provide the option to tinker with Tesseract's configuration, OCRmyPDF provides easy access to some simpler settings that can improve the fidelity of OCR. Additionally, OCRmyPDF can easily generate an output PDF containing text in the appropriate places. Both projects are under active development, so it's worth testing the latest versions for any improvements.

Finally, if you're interested in using OCR with Alfresco Community Edition then have a look at the Alfresco OCR Transformer on GitHub.
