Replication service on a 4.2f version

Hello,

I have a number of questions about the replication jobs.

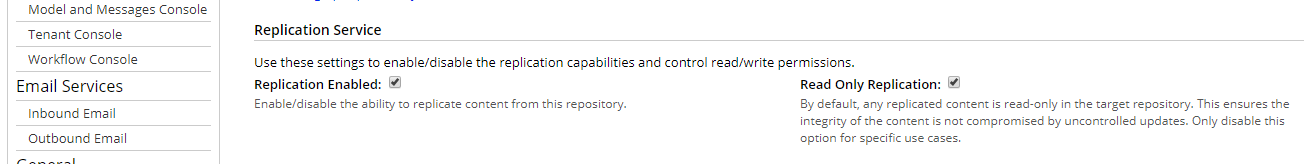

- By default, the target content is read-only. Is there a way to change that?

- Is the following link still recommended?

https://community.alfresco.com/docs/DOC-6088-ftr#w_filesystemtransferreceiver28ftr2ffstr29

- Is the first step documented in the 4.2 documentation mandatory?

Configuring Share to open locked content in the source repository | Alfresco Documentation

Thanks in advance for your ideas.

Cheers

Re: Replication service on a 4.2f version

My customers have had a terrible experience with the replication service. Are you able to elaborate on how you are planning on using it? Maybe there is a more robust alternative.

Re: Replication service on a 4.2f version

I agree with Jeff.

I have used the replication service in both forms:

a) FSTR, the File System Transfer Receiver (from Alfresco to a file system).

b) The Alfresco replication service (from one Alfresco to another Alfresco).

In the admin console there is an option to disable read-only replication; read-only is selected by default.

For FSTR, I tried disabling it, but if you then update the content on the source and replicate again, it causes a problem and a junk file (.ftr something) is created. So the target should stay read-only, which basically makes it one-way replication.

It is also very slow. On the FSTR side you have a file system with an executable JAR and an internal database that gets updated in the process, which takes a lot of time.

I also had issues with the linking module.

For the Alfresco replication service:

a) It is very slow.

b) There are lots of timeouts: if you have a web proxy in front of the Tomcats, the socket is most likely to be closed, depending on the proxy configuration.

c) Setting up SSL and replicating over SSL is also very difficult and very slow.

Just to add, there are faster approaches.

We used the GoodSync tool to sync the content at regular intervals via WebDAV. We were able to sync effectively onto the file system with it.

Re: Replication service on a 4.2f version

Thank you, guys.

The idea was to replicate part of the content of our production server to a "form" server used for tests only. Only a part, because we just need the financial documents, which are stored in a single folder and classified in a tree structure: about 200,000 documents. We just need to keep the same UUID/noderef and be able to modify the replicated content.

So, if I understand you correctly, the replication mechanism is not recommended!

What would you recommend as an alternative?

Thanks again

Cheers

Guillaume

Re: Replication service on a 4.2f version

Hi,

We won't use the replication service.

With the GoodSync tool or WebDAV sync, will we lose the UUID?

Have you ever used ACP? Is a tutorial available?

Thanks

Re: Replication service on a 4.2f version

Hi,

Yes, GoodSync just copies the contents and keeps the destination in sync with the contents at the source.

- GoodSync was used when syncing at regular scheduled intervals was important, but the UUID was not available at the destination. It is just a sync tool like WinSCP, with better scheduling capabilities and support for different types of sources such as S3.

I am not aware of ACP.

Jeff is the best person I am aware of to help with your scenario. I just thought I would share some experience with the replication service; if you have a matching use case, you can try this.

Best of luck...

Re: Replication service on a 4.2f version

Do you need to sync 200,000 documents or are you syncing only a few documents and NOT syncing 200,000 documents? If you are syncing all 200,000 you will definitely not use the replication service.

Also, 200,000 is probably too many docs to put in an ACP file although it depends on how big the documents are. If you want to use ACP you will probably have to do many smaller batches.
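To make the batching idea concrete, here is a minimal sketch of splitting a long list of node references into smaller export batches. The batch size of 5,000 and the node reference strings are assumptions for illustration only; the right size depends on document sizes, and the actual ACP export of each batch is not shown.

```python
from typing import Iterator, List, Sequence


def batches(node_refs: Sequence[str], batch_size: int) -> Iterator[List[str]]:
    """Yield consecutive slices of node_refs, each at most batch_size long."""
    if batch_size <= 0:
        raise ValueError("batch_size must be positive")
    for start in range(0, len(node_refs), batch_size):
        yield list(node_refs[start:start + batch_size])


# Hypothetical node references; real ones would come from a query
# against the source repository.
node_refs = [f"workspace://SpacesStore/doc-{i}" for i in range(200_000)]

# 5,000 per batch is an arbitrary illustrative value, not a recommendation.
export_batches = list(batches(node_refs, 5_000))
print(len(export_batches))  # 40
```

Each batch would then be exported and imported as its own ACP file, so a failure only affects one batch rather than the whole set.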

I recommend an asynchronous approach. At a very high level, you can put messages on a queue or an event stream and then have an integration server that monitors the queue/stream so that it can replicate the changes to another server. I've done this with ActiveMQ. I wrote a behavior to watch for the changes I'm interested in. The behavior then puts a message on a queue that essentially says, "This object changed". Then, over in my other Alfresco server, I have a listener that is subscribed to the queue. When it sees a message, it grabs the node reference from the message, then calls the source Alfresco server to fetch the object and persist it into the repo.

Similarly, when an object is deleted, a message goes on the queue, then the target server sees that message and deletes the object on the target server.

In my example, I didn't care about node refs changing between the two servers. I just stored the originating node reference as a property on the target server so I could track back to the source.
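The flow described above can be sketched roughly as follows. This is an in-memory illustration only, not Alfresco or ActiveMQ code: `SourceRepo`, `TargetRepo`, `ChangeMessage`, `drain`, and the `sourceNodeRef` property name are all hypothetical, and Python's `queue.Queue` stands in for the message broker.

```python
import queue
from dataclasses import dataclass, field
from typing import Dict


@dataclass
class Node:
    node_ref: str
    content: str
    properties: Dict[str, str] = field(default_factory=dict)


@dataclass
class ChangeMessage:
    event: str      # "changed" or "deleted"
    node_ref: str   # node reference on the source server


class SourceRepo:
    """Stand-in for the source server; save/delete play the role of the behavior."""

    def __init__(self, outbox: "queue.Queue[ChangeMessage]") -> None:
        self.nodes: Dict[str, Node] = {}
        self.outbox = outbox

    def save(self, node_ref: str, content: str) -> None:
        self.nodes[node_ref] = Node(node_ref, content)
        self.outbox.put(ChangeMessage("changed", node_ref))

    def delete(self, node_ref: str) -> None:
        self.nodes.pop(node_ref, None)
        self.outbox.put(ChangeMessage("deleted", node_ref))

    def fetch(self, node_ref: str) -> Node:
        return self.nodes[node_ref]


class TargetRepo:
    """Stand-in for the target server; nodes are indexed by source node ref."""

    def __init__(self) -> None:
        self.nodes: Dict[str, Node] = {}
        self._counter = 0

    def upsert_from_source(self, source_node: Node) -> None:
        node = self.nodes.get(source_node.node_ref)
        if node is None:
            # The target assigns its own node ref, so refs differ between servers.
            self._counter += 1
            node = Node(f"target-{self._counter}", "")
            self.nodes[source_node.node_ref] = node
        node.content = source_node.content
        # Keep the originating node ref as a property to track back to the source.
        node.properties["sourceNodeRef"] = source_node.node_ref

    def delete_by_source(self, node_ref: str) -> None:
        self.nodes.pop(node_ref, None)


def drain(outbox: "queue.Queue[ChangeMessage]", source: SourceRepo, target: TargetRepo) -> None:
    """Listener side: process every pending message, one-way from source to target."""
    while True:
        try:
            msg = outbox.get_nowait()
        except queue.Empty:
            return
        if msg.event == "deleted":
            target.delete_by_source(msg.node_ref)
        elif msg.node_ref in source.nodes:
            # Skip "changed" messages for nodes that have since been deleted.
            target.upsert_from_source(source.fetch(msg.node_ref))


outbox: "queue.Queue[ChangeMessage]" = queue.Queue()
source = SourceRepo(outbox)
target = TargetRepo()

source.save("src-1", "invoice v1")
source.save("src-1", "invoice v2")
source.save("src-2", "receipt")
source.delete("src-2")
drain(outbox, source, target)
```

Because the listener fetches the current state from the source rather than trusting the message payload, repeated "changed" messages for the same node are harmless: the target simply ends up with the latest version.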

In your case, instead of fetching the object you could trigger an export into an ACP file, then on the target server, import the ACP to preserve the node reference.

My example does one-way sync, which it sounds like would work for you. The folks at Parashift have a product that does two-way synchronization leveraging event streams and Apache Camel. Here is a blog post they wrote about it: Stream Processing with Alfresco – Parashift. They did a presentation at DevCon in Zaragoza, but I can't seem to find that presentation at the moment.

If you want to try using streams instead of queues, you might take a look at a project I have on GitHub that writes events to Apache Kafka from a behavior. It's just something I was playing with, so it is not production-hardened code.

Anyway, I hope that gives you some useful ideas.
