Amazon DynamoDB Integration Using Data Models - Process Services


This is a continuation of my previous blog post about data models, Business Data Integration made easy with Data Models. In this example I'll show how to integrate Alfresco Process Services powered by Activiti (APS) with Amazon DynamoDB using Data Models. The steps required to set up this example are:

- Create Amazon DynamoDB tables

- Model the Data Model entities in APS web modeler

- Model process components using Data Models

- DynamoDB Data Model implementation

- App publication and Data Model in action

Let’s look at each of these steps in detail. Please note that I’ll use the acronym APS throughout this post to refer to Alfresco Process Services powered by Activiti. The source code required to follow the next steps can be found on GitHub: aps-dynamodb-data-model

Create Amazon DynamoDB tables

As a first step to run this sample code, create the tables in the Amazon DynamoDB service.

- Sign in to AWS Console https://console.aws.amazon.com/

- Select "DynamoDB" from AWS Service List

- Create the table "Policy" with the following settings:

- Table name : Policy

- Primary key : policyId

- Repeat the same steps to create another table called "Claim"

- Table name : Claim

- Primary key : claimId

Now you have the Amazon DynamoDB business data tables ready for process integration.
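If you would rather script the table creation than click through the console, the same two tables can be described as CreateTable requests. The snippet below is a minimal sketch, not part of the sample project: it assumes the boto3 library is installed and AWS credentials are configured, and it assumes the primary keys are string attributes and that on-demand billing is acceptable, neither of which is stated above. The helper names are hypothetical.

```python
def table_definition(table_name: str, key_name: str) -> dict:
    """Build CreateTable arguments for a table with a single partition key.

    Assumption: the key is a string attribute ("S") and the table uses
    on-demand billing; adjust both to match your own setup.
    """
    return {
        "TableName": table_name,
        "AttributeDefinitions": [
            {"AttributeName": key_name, "AttributeType": "S"}
        ],
        "KeySchema": [{"AttributeName": key_name, "KeyType": "HASH"}],
        "BillingMode": "PAY_PER_REQUEST",
    }


# The two tables used in this post.
TABLES = [
    table_definition("Policy", "policyId"),
    table_definition("Claim", "claimId"),
]


def create_tables(region_name: str) -> None:
    """Create both tables and wait until each one exists."""
    import boto3  # third-party AWS SDK, imported only when actually used

    client = boto3.client("dynamodb", region_name=region_name)
    for definition in TABLES:
        client.create_table(**definition)
        # Block until DynamoDB reports that the table exists.
        client.get_waiter("table_exists").wait(
            TableName=definition["TableName"]
        )
```

Calling `create_tables("us-east-1")` once should leave you with the same "Policy" and "Claim" tables as the console steps above.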

Model the Data Model entities in APS web modeler

The next step is to model the business entities in the APS Web Modeler. I have already built the data models, and they are available in the project. All you have to do is import the "InsuranceDemoApp.zip" app into your APS instance. Please note that this app was built using APS version 1.6.1 and cannot be imported into older versions. If you are using APS version 1.5.X or older, please import the app from the project I used in my previous blog post.

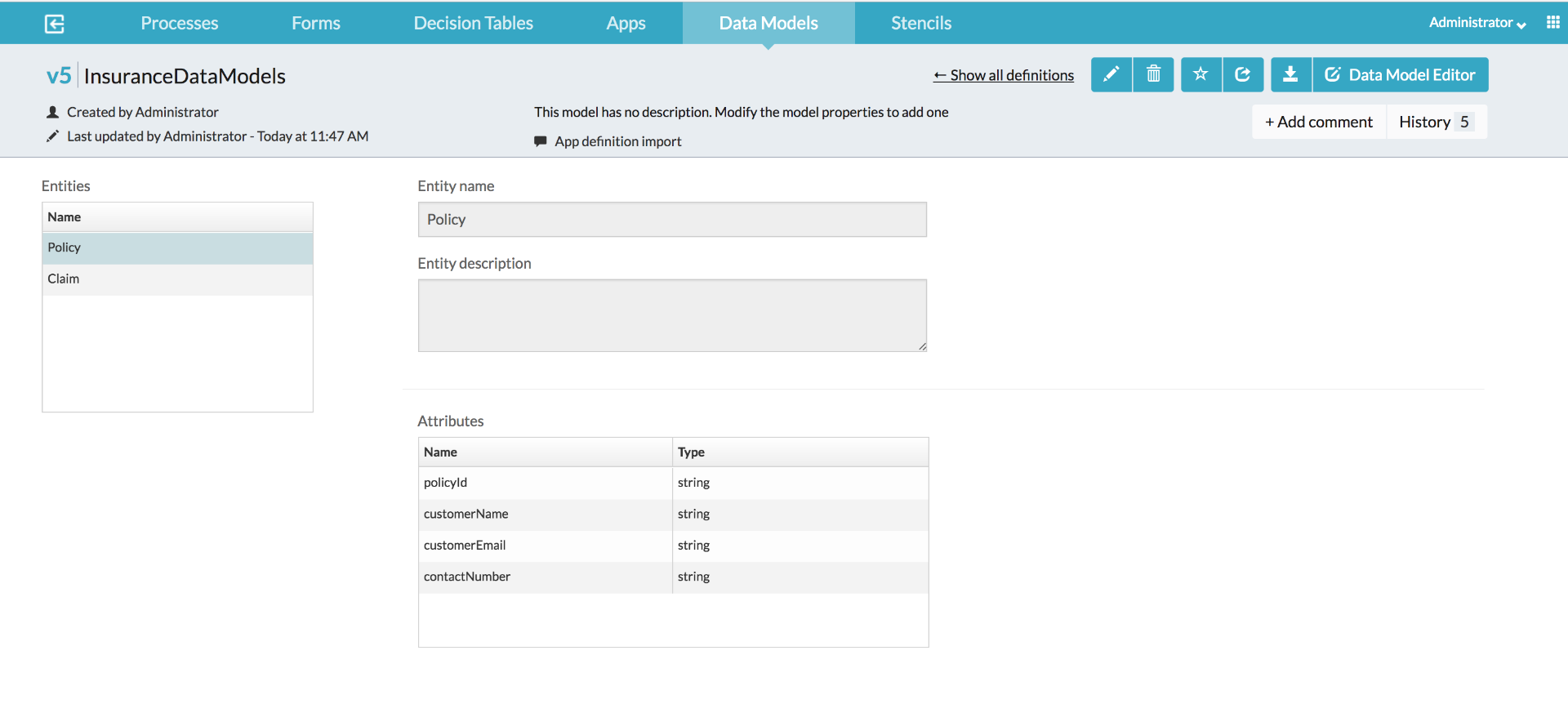

Once the app is successfully imported, you should be able to see the data models. A screenshot is given below.

Model process components using Data Models

Now that we have the data models available, we can start using them in processes and associated components such as process conditions, forms, and decision tables. If you inspect the two process models that were imported in the previous step, you will find various usages of the data model entities. Some of those are shown below:

Using Data Model in a process model

Using Data Models in sequence flows

Using Data Model in Forms

Using Data Models in Decision Tables (DMN)

Let’s now go to the next step, which is the implementation of the custom data model that handles the communication between process components and Amazon DynamoDB.

DynamoDB Data Model implementation

In this step we will create an extension project that handles the APS<-->Amazon DynamoDB interactions. You can check out the source code of this implementation at aps-dynamodb-data-model. For step-by-step instructions on implementing custom data models, please refer to Activiti Enterprise Developer Series - Custom Data Models. Since you need a valid licence to access the Alfresco Enterprise repository to build this project, a pre-built library is available in the project for trial users: aps-dynamodb-data-model-1.0.0-SNAPSHOT.jar. The steps required to deploy the jar file are given below.

- Create a file named "aws-credentials.properties" with the following entries and make it available in the APS classpath

aws.accessKey=<your aws access key>

aws.secretKey=<your aws secret key>

aws.regionName=<aws region eg:us-east-1>

- Deploy aps-dynamodb-data-model-1.0.0-SNAPSHOT.jar file to activiti-app/WEB-INF/lib
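A missing or misspelled key in "aws-credentials.properties" is an easy mistake to make, so it can help to check the file before restarting APS. The snippet below is a small pre-flight sketch and not part of the extension itself: it only understands plain `key=value` lines, and the function names are hypothetical. How the extension reads the file internally is not covered here.

```python
REQUIRED_KEYS = ("aws.accessKey", "aws.secretKey", "aws.regionName")


def parse_properties(text: str) -> dict:
    """Parse simple key=value lines, skipping blank lines and # or ! comments."""
    props = {}
    for raw_line in text.splitlines():
        line = raw_line.strip()
        if not line or line[0] in "#!":
            continue
        key, separator, value = line.partition("=")
        if separator:  # ignore lines that have no '=' at all
            props[key.strip()] = value.strip()
    return props


def missing_keys(props: dict) -> list:
    """Return the required keys that are absent or have an empty value."""
    return [key for key in REQUIRED_KEYS if not props.get(key)]


def check_file(path: str) -> list:
    """Read a properties file from disk and report any missing keys."""
    with open(path, encoding="utf-8") as handle:
        return missing_keys(parse_properties(handle.read()))
```

An empty list from `check_file("aws-credentials.properties")` means all three entries are present; it does not verify that the credentials themselves are valid.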

App publication and Data Model in action

This is the last step, where you can see the data model in action. To execute the process, you first need to deploy (publish) the imported app. You can do this by going to APS App UI → App Designer → Apps → InsuranceDemoApp → Publish.

Once the process and process components are deployed, you can either execute the process yourself and see it in action, or refer to the video link in Business Data Integration made easy with Data Models, which demonstrates the data model.

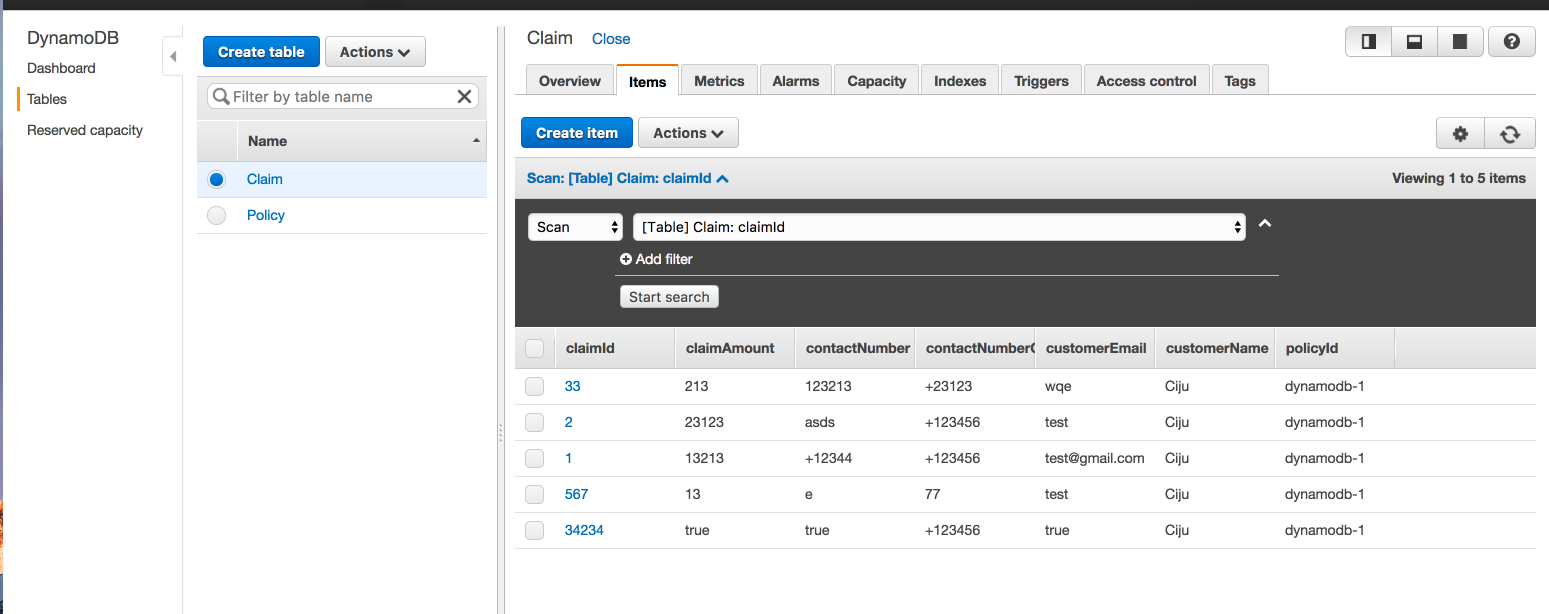

Once you have run the processes, log back in to the AWS Console https://console.aws.amazon.com/ and check the data in the respective tables, as shown below.
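If you prefer to check the tables from a script rather than the console, a scan of each table returns the stored items. The snippet below is a rough sketch under a few assumptions: boto3 is installed, credentials are configured, and the tables are small enough that a full scan is reasonable. The helper names are hypothetical, and only the common attribute types are handled.

```python
from decimal import Decimal


def plain(attr: dict):
    """Convert one DynamoDB attribute value, e.g. {"S": "abc"}, to a Python value."""
    (type_tag, value), = attr.items()  # each attribute value has exactly one type tag
    if type_tag in ("S", "BOOL"):
        return value
    if type_tag == "N":
        return Decimal(value)  # numbers arrive as strings
    if type_tag == "NULL":
        return None
    if type_tag == "L":
        return [plain(element) for element in value]
    if type_tag == "M":
        return {name: plain(element) for name, element in value.items()}
    raise ValueError(f"unsupported attribute type: {type_tag}")


def plain_item(item: dict) -> dict:
    """Convert a whole item from attribute-value form to a plain dict."""
    return {name: plain(attr) for name, attr in item.items()}


def dump_table(table_name: str, region_name: str) -> list:
    """Scan a table page by page and return all items as plain dicts."""
    import boto3  # third-party AWS SDK, imported only when actually used

    client = boto3.client("dynamodb", region_name=region_name)
    items = []
    for page in client.get_paginator("scan").paginate(TableName=table_name):
        items.extend(plain_item(item) for item in page["Items"])
    return items
```

For example, `dump_table("Policy", "us-east-1")` should list the policies written by the process, each keyed by its policyId.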

That’s all for now. Again, stay tuned for more data model samples.
